Connectivity Policies

Layered Security

What is Segmentation?

Wikipedia notes: “Network segmentation in computer networking is the act or practice of splitting a computer network into subnetworks, each being a network segment. Advantages of such splitting are primarily for boosting performance and improving security.”

Network segmentation has been widely used since the inception of computer networks. In recent decades, it has evolved from the physical separation of Ethernet segments to the splitting of networks into logical IP subnets, and from the filtering of packet flows by TCP/UDP port to the mutual authorization of flow endpoints. The ability to restrict communication at the level of individual flows gave rise to the term microsegmentation.

For the most part, this evolution layered segmentation techniques on top of one another rather than replacing one with another. On the one hand, the layered approach has reduced the risk that a single breach compromises the entire communication environment. On the other hand, the complexity of managing multiple layers of defense has introduced packet-processing overhead and, much worse, a high risk of inconsistent security policy.

In an era of cloud migration and multi-platform application deployment, the approach to network segmentation is being revisited. Stretching VLANs and VPNs across clouds or computational platforms, updating ACLs on hosts and network/cloud firewalls, and employing service discovery mechanisms that are unaware of service reachability are no longer viable options for segmentation. Neither is confining segmentation to a single layer of mTLS tunnels while removing all the network checkpoints between services. Instead, multi-layered segmentation is becoming part of infrastructure-as-code practice and blending into application CI/CD pipelines.

Segmentation in Multicloud

Service Connectivity Policy

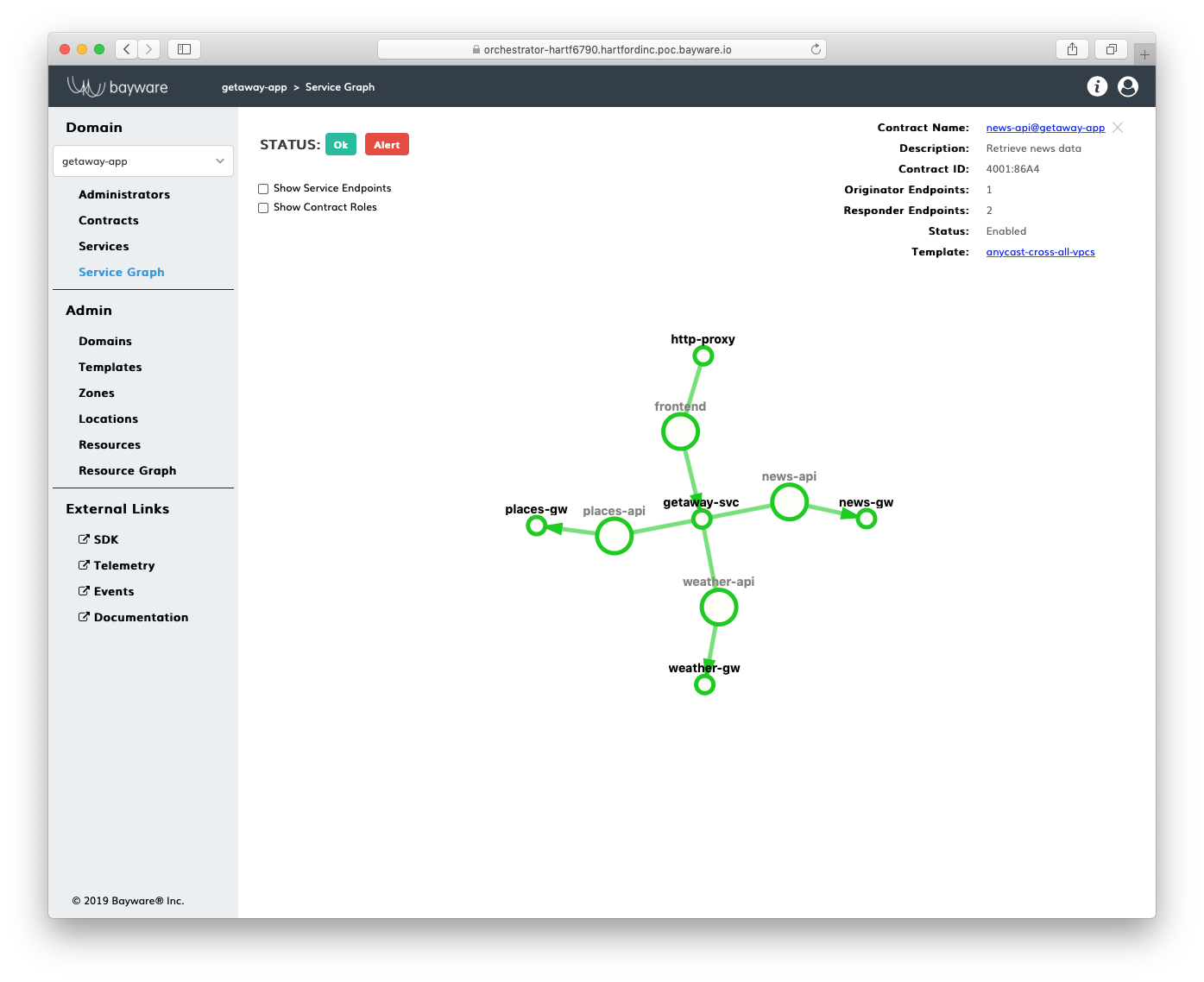

The approach to microsegmentation in the service interconnection fabric embraces the layered-security concept and enhances it with a single source of security policy for all segmentation layers. A logical entity called the service graph fully defines the security segments for application services in an abstract, infrastructure-agnostic manner.

Fig. 4 Service Graph

In the service interconnection fabric, the application service graph governs network connectivity between services, packet-flow filtering, and mutual service discovery across various clouds and computational environments (VM and container-based).

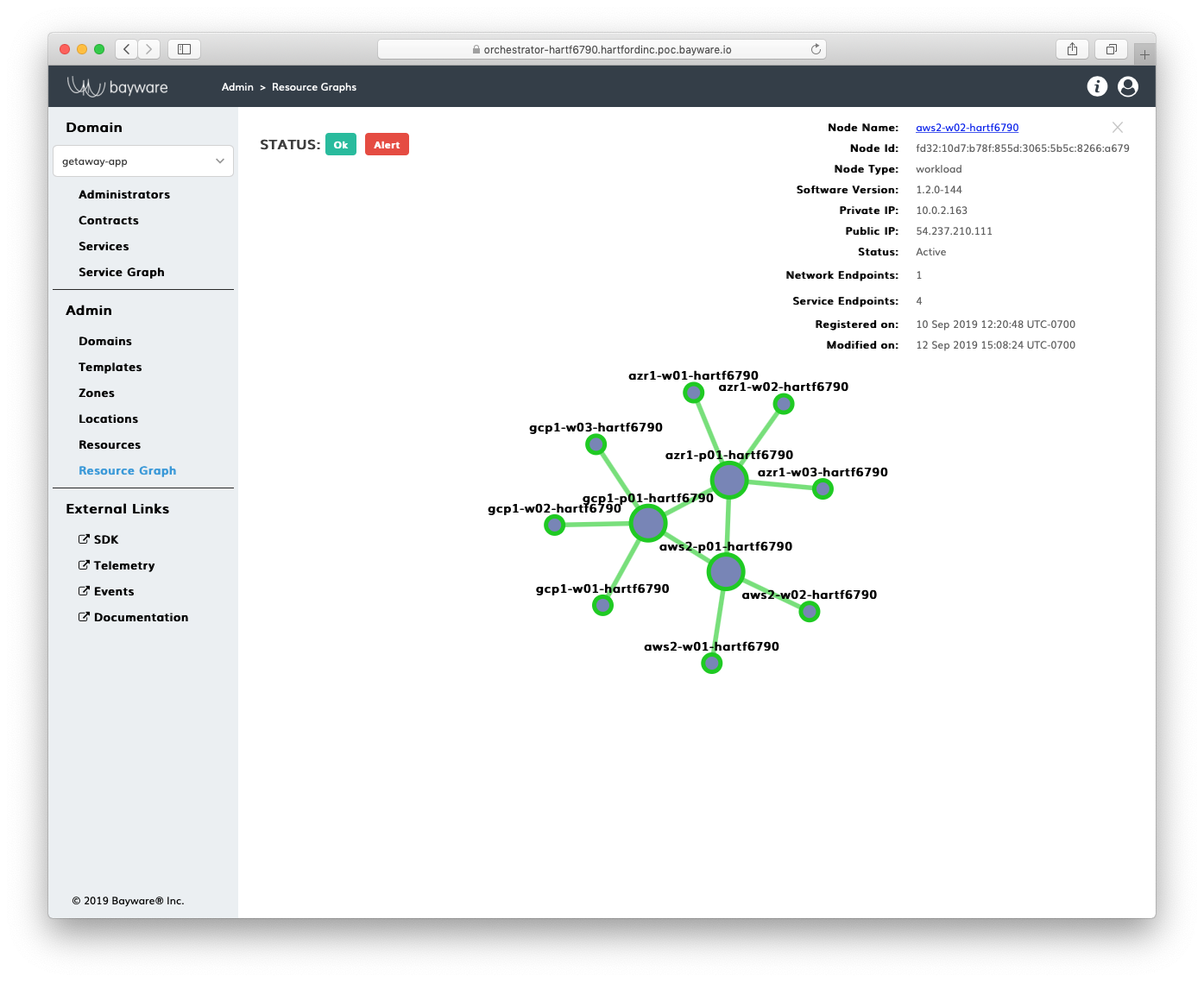

Resource Connectivity Policy

Segmentation at the resource layer reinforces the service segmentation. A logical entity called the resource graph represents abstracted computational resources in the service interconnection fabric.

Fig. 5 Resource Graph

Resource segmentation isolates each computational resource (e.g., a physical server, VM, or Kubernetes worker node) from the others and ensures that only the application services described in the service graph can reach each other on top of the resource layer.

Service Connectivity Policy

Microsegmentation in the service interconnection fabric is based on application service identity and communication intent, as opposed to the service IP/MAC addresses and associated routing/switching configuration used in traditional networks. This approach allows the security policy for an application to be defined all at once and in advance. Although the application services might eventually be dispersed across private and public clouds, in both VM and container environments, the policy specification remains unchanged because it carries no infrastructure or environment dependencies.

The infrastructure-agnostic security policy uniformly governs application behavior at multiple communication layers. The service identity and communication intent determine:

- Network connectivity – reachability of the service by others in the network;

- Packet filtering – protocols and ports open for packet flows belonging to the service;

- Service discovery – capability of the service to advertise itself and find other services.

A service may have multiple roles, each defining communication intent in a different way. This allows the service to be exposed in multiple security segments while maintaining connectivity, filtering, and discovery policies in each segment independently. The security segment is a logical entity of the service graph called a contract. Only services that are parties to the same contract in opposite roles can communicate with each other.
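The contract rule above can be sketched in a few lines of Python. This is an illustrative model only, not the fabric's actual data model: the names `RoleBinding`, `CONTRACT_ROLES`, and `services_can_communicate`, and the assumption that each contract has exactly two roles, are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoleBinding:
    """One service taking one role in one contract (hypothetical model)."""
    service: str
    contract: str
    role: str


# Assumption: every contract names exactly two opposite roles.
CONTRACT_ROLES = {
    "frontend": ("client", "server"),
    "backend": ("client", "server"),
}


def bindings_match(a: RoleBinding, b: RoleBinding) -> bool:
    """True when both bindings sit in the same contract in opposite roles."""
    if a.contract != b.contract:
        return False
    roles = CONTRACT_ROLES.get(a.contract)
    if roles is None:
        return False
    return {a.role, b.role} == set(roles)


def services_can_communicate(bindings, s1: str, s2: str) -> bool:
    """Two services may talk if any pair of their bindings matches."""
    return any(
        bindings_match(a, b)
        for a in bindings if a.service == s1
        for b in bindings if b.service == s2
    )


# A service with two roles is exposed in two segments at once.
BINDINGS = [
    RoleBinding("user", "frontend", "client"),
    RoleBinding("web", "frontend", "server"),
    RoleBinding("web", "backend", "client"),
    RoleBinding("db", "backend", "server"),
]
```

In this sketch, `web` holds a role in both contracts, so it can reach `user` and `db`, while `user` and `db` share no contract and therefore cannot communicate.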

Network Connectivity

The contract determines the IP reachability of one service by another in the service interconnection fabric. Only services that hold opposite roles in the same contract can potentially reach each other.

The contract itself can restrict service reachability even further. For example, the network connectivity policy might require that opposite-role services be within one hop of each other, in other words, in the same VPC.

Another example of the network connectivity policy is unidirectional communication. The policy might define that all services in a given role can receive data from the opposite-role services but are not allowed to send any content into the network. A UDP-streaming service crossing multiple VPCs relies on such a communication pattern.
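The two example policies above (a hop limit and unidirectional communication) can be modeled roughly as follows. The class `ConnectivityPolicy`, its fields, and the role names are hypothetical and chosen only for illustration; the source does not specify how a contract encodes these restrictions.

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class ConnectivityPolicy:
    """Hypothetical per-contract connectivity restrictions."""
    # Roles that are allowed to send data into the network.
    send_roles: FrozenSet[str]
    # Maximum hop distance between opposite-role services; None = no limit.
    max_hops: Optional[int] = None


def may_send(policy: ConnectivityPolicy, sender_role: str, hops: int) -> bool:
    """Check whether a sender in the given role may reach a peer `hops` away."""
    if sender_role not in policy.send_roles:
        return False  # this role is receive-only under the policy
    if policy.max_hops is not None and hops > policy.max_hops:
        return False  # peer is farther than the policy permits
    return True


# One hop, i.e. the same VPC; both roles may send.
SAME_VPC = ConnectivityPolicy(
    send_roles=frozenset({"client", "server"}), max_hops=1
)

# Unidirectional streaming across VPCs: only the publisher sends.
STREAMING = ConnectivityPolicy(send_roles=frozenset({"publisher"}))
```

Under `STREAMING`, a subscriber can receive from a publisher several hops away but is never permitted to send, which mirrors the UDP-streaming pattern described above.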

Packet Filtering

Another part of the segmentation policy covered by the contract is packet filtering. In addition to packet filtering at the protocol and port level, every new packet flow must be cryptographically authorized by every policy processor before opening a connection in the network.

The packet filters at the opposite-role endpoints of the same flow mirror each other. An ingress rule for one endpoint implies an automatically generated, mirrored rule for the opposite-role endpoint. The rules are applied synchronously across all clouds for all service endpoints. Moreover, the ingress and egress rules on both sides of the flow function as stateful firewalls or, more precisely, reflexive ACLs.
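As a minimal sketch of rule mirroring, the snippet below derives the opposite-role rule from a given one. The `Rule` type and the `mirror` function are hypothetical, and the assumption that an ingress rule is mirrored by an egress rule with the same protocol and port is an interpretation of the text, not a documented format.

```python
from dataclasses import dataclass

# Assumption: mirroring swaps the traffic direction.
OPPOSITE_DIRECTION = {"ingress": "egress", "egress": "ingress"}


@dataclass(frozen=True)
class Rule:
    """Hypothetical packet-filter rule attached to a role in a contract."""
    role: str
    direction: str  # "ingress" or "egress"
    protocol: str   # e.g. "tcp" or "udp"
    port: int


def mirror(rule: Rule, opposite_role: str) -> Rule:
    """Generate the rule that the opposite-role endpoint must carry."""
    return Rule(
        role=opposite_role,
        direction=OPPOSITE_DIRECTION[rule.direction],
        protocol=rule.protocol,
        port=rule.port,
    )


# Only the server-side rule is written by hand; the client side is derived.
server_rule = Rule(role="server", direction="ingress", protocol="tcp", port=8080)
client_rule = mirror(server_rule, opposite_role="client")
```

Deriving one side from the other means the two filters cannot drift apart, which is the consistency property the mirrored rules are meant to provide.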

Flow authorization happens before protocol and port filtering. It effectively blocks all communication except the packet exchange between the opposite-role services. The flow authorization ensures the flow originator always plays the role assigned by the service graph.
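The ordering of the two checks can be illustrated with a short sketch. The source does not describe the cryptographic mechanism, so the HMAC token below is only a stand-in for it; `FABRIC_KEY`, `sign_flow`, and `admit` are hypothetical names, and a shared secret is assumed purely to keep the example self-contained.

```python
import hashlib
import hmac

# Stand-in secret for the demo only; the real fabric's scheme is not specified.
FABRIC_KEY = b"demo-key-not-for-production"


def sign_flow(contract: str, role: str, key: bytes = FABRIC_KEY) -> bytes:
    """Issue a token binding a flow originator to a role in a contract."""
    message = f"{contract}:{role}".encode()
    return hmac.new(key, message, hashlib.sha256).digest()


def admit(contract, claimed_role, token, protocol, port, open_ports) -> str:
    """Authorize the flow first, then apply the protocol/port filter."""
    # Step 1: flow authorization. The originator must prove its role.
    expected = sign_flow(contract, claimed_role)
    if not hmac.compare_digest(expected, token):
        return "denied: unauthorized flow"
    # Step 2: protocol and port filtering, reached only by authorized flows.
    if (protocol, port) not in open_ports:
        return "denied: port closed"
    return "allowed"


OPEN_PORTS = {("tcp", 5432)}
client_token = sign_flow("backend", "client")
```

Because authorization runs first, a flow whose originator claims a role it was not assigned is rejected before the port filter is consulted at all.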

Service Discovery

The application service in the service interconnection fabric is able to discover only the opposite-role services. Moreover, only reachable and already-authorized remote service instances appear in the local service discovery database.

The service discovery segmentation ensures that the service at a given workload node can resolve only the opposite-role instances that have been authorized and proven reachable from that particular node.

As an example, the network connectivity policy might specify that communication between services be confined to a single VPC. If two pairs of opposite-role service instances are deployed in two separate VPCs, each service instance discovers only the opposite-role instance in its own VPC.
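The two-VPC example can be sketched as a filter over candidate instances. The `Instance` type, the `discoverable` function, and the single client/server contract are hypothetical simplifications; reachability is reduced here to a same-VPC check.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Instance:
    """Hypothetical service instance within one contract."""
    service: str
    role: str
    vpc: str
    authorized: bool = True


def discoverable(local: Instance, candidates: List[Instance],
                 same_vpc_only: bool) -> List[Instance]:
    """Return the instances that `local` is allowed to resolve."""
    visible = []
    for inst in candidates:
        if inst.role == local.role:
            continue  # only opposite-role instances are discoverable
        if not inst.authorized:
            continue  # unauthorized instances never appear
        if same_vpc_only and inst.vpc != local.vpc:
            continue  # not reachable under the connectivity policy
        visible.append(inst)
    return visible


# Two client/server pairs deployed in two separate VPCs.
INSTANCES = [
    Instance("app", "client", "vpc-a"),
    Instance("db", "server", "vpc-a"),
    Instance("app", "client", "vpc-b"),
    Instance("db", "server", "vpc-b"),
]
```

With `same_vpc_only=True`, the client in `vpc-a` resolves only the server in `vpc-a`; lifting the restriction makes both servers visible.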

The service discovery segmentation is fully automatic and doesn’t require adding any specification to the contract in order to be enacted.

Resource Connectivity Policy

Resource segmentation in the service interconnection fabric reinforces the service segmentation layer. Splitting computational resources, i.e., workload nodes, into segments offers an additional layer of security for applications running on these nodes and ensures that only the communication defined by the service graph is present in the fabric.

Each computational resource in the service interconnection fabric possesses an X.509 certificate as a node identity document on which all resource segmentation layers are built:

- Fabric segmentation – sandbox for application deployment with workload nodes isolated from the outside world;

- Zone segmentation – group of workload nodes within the fabric whose inbound and outbound traffic is regulated through policy;

- Workload segmentation – workload node isolation from the nodes in the same group.
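To make the nesting of the three layers concrete, the sketch below reports the outermost layer that separates two nodes. The `Node` type and the `separating_layer` function are hypothetical, and the plain-string identifiers stand in for whatever the node's X.509 identity actually carries.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Node:
    """Hypothetical workload node; fields stand in for certificate attributes."""
    name: str
    fabric: str
    zone: str


def separating_layer(a: Node, b: Node) -> Optional[str]:
    """Return the outermost segmentation layer that lies between two nodes."""
    if a.fabric != b.fabric:
        return "fabric"    # one node is outside the other's sandbox
    if a.zone != b.zone:
        return "zone"      # traffic crosses a policy-regulated zone boundary
    if a.name != b.name:
        return "workload"  # same zone, but nodes are isolated from each other
    return None            # same node


n1 = Node("node-1", fabric="prod", zone="aws-east")
n2 = Node("node-2", fabric="prod", zone="aws-east")
n3 = Node("node-3", fabric="prod", zone="azure-west")
n4 = Node("node-4", fabric="test", zone="aws-east")
```

Even two nodes in the same zone are separated at the workload layer, so any traffic between them still has to be justified by the service graph.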

The security policy for resource segmentation is infrastructure-agnostic and works the same in all clouds.

Summary

The service interconnection fabric offers a new approach to network segmentation in public and private clouds for both VM and container environments. The solution is designed to provide high performance and uncompromised security. Segmentation is part of the application deployment process, embedded into infrastructure-as-code rather than coming from a disconnected network configuration system. The single-source, infrastructure-agnostic policy, expressed as service identity and communication intent, doesn’t sacrifice the layered-security approach but governs segmentation across layers consistently and in real time.