Overview

Problem

Compute resources are now available instantly, and at ever-finer granularity, whenever an application requests them. Various orchestration solutions facilitate those requests in private data centers and public clouds. Some solutions go even further and allow applications to seamlessly spin up compute resources across multiple clouds and various virtualization platforms.

While compute resource management has greatly improved, connectivity provisioning in a multicloud environment is not nearly as instant, seamless, or granular. Using a declarative language, an application can set up a new workload anywhere in the world without delay, but that workload's connectivity with other workloads depends on third-party configuration of the multiple virtual or physical middleboxes (gateways, routers, load balancers, firewalls) installed between them.

The SDN-style, configuration-centric connectivity model does not let applications manage the communication environment the way they manage the computational environment. An application cannot declare a workload connectivity requirement (i.e., intent) once and then have that connectivity applied automatically each time a new workload instance appears in a private data center or a public cloud.

A purely application-level approach, in which communication intent translates only into HTTPS connections, does not work either. Confining all connectivity provisioning to a layer of mTLS tunnels, while removing network checkpoints between workloads, oversimplifies the inherent complexity of the application communication environment. Truly multi-layered security requires enforcing application intent at multiple levels, so this approach does not actually eliminate middlebox configuration.

To make migration to clouds easier, faster, and more secure, application connectivity ought to become part of the application deployment code, with communication intent expressed in an infrastructure-agnostic manner. Deploying a workload instance in any cloud, on any virtualization platform, would then automatically bring up both the compute resources and the desired connectivity. No middlebox configuration would be required to establish secure connectivity between workloads in different clouds, clusters, or other trust domains.

Solution

The Service Interconnection Fabric (SIF) is a cloud migration platform that enforces connectivity policy and provides service discovery functions for application services packaged as containers or virtual machines and deployed in private data centers or public clouds. The SIF eliminates network configuration by capturing and applying application communication intent as a service graph that is infrastructure-agnostic and based entirely on application service topology.

The SIF is zero-trust out of the box. An application defines its own connectivity policy and executes it at workload deployment time. The output of this execution is a set of ephemeral security segments in the cross-cloud network, each connecting a subset of workloads. As a result, whenever a workload appears anywhere in the fabric, it automatically receives the connectivity with other workloads that the application service graph specifies. Zero trust ensures that no connectivity exists between workloads unless it was explicitly specified or requested.
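The zero-trust behavior described above can be illustrated with a small Python sketch. It is not the SIF's policy language or API: `Workload`, `ServiceGraph`, and `segments` are hypothetical names, and a "security segment" is reduced here to a permitted (client, server) pair of instances. What it shows is that intent is declared once against service identities, so a new instance in any cloud picks up connectivity with no further configuration, while any pair without a declared edge gets none.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Workload:
    """One running instance of an application service (hypothetical model)."""
    name: str      # instance name, unique across the fabric
    service: str   # service identity, e.g. "frontend"
    cloud: str     # where the instance runs; policy never looks at this


@dataclass
class ServiceGraph:
    """Communication intent as directed edges between service identities."""
    edges: set[tuple[str, str]] = field(default_factory=set)

    def allow(self, client: str, server: str) -> None:
        self.edges.add((client, server))

    def permits(self, src: Workload, dst: Workload) -> bool:
        # Zero-trust default: anything not declared as an edge is denied.
        return (src.service, dst.service) in self.edges


def segments(graph: ServiceGraph, workloads: list[Workload]) -> set[tuple[Workload, Workload]]:
    """Return every (client, server) instance pair the intent allows right now."""
    return {
        (a, b)
        for a in workloads
        for b in workloads
        if a != b and graph.permits(a, b)
    }


if __name__ == "__main__":
    graph = ServiceGraph()
    graph.allow("frontend", "backend")  # intent declared once

    fleet = [Workload("fe-1", "frontend", "aws"), Workload("be-1", "backend", "gcp")]
    print(len(segments(graph, fleet)))  # 1

    # A new backend instance in another cloud is covered by the same edge.
    fleet.append(Workload("be-2", "backend", "azure"))
    print(len(segments(graph, fleet)))  # 2
```

Note that the `cloud` field plays no part in the decision; that is the sketch's way of expressing an infrastructure-agnostic policy.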

In the SIF, connectivity is part of application deployment code. Moreover, the fabric itself is an infrastructure-as-code component. As such, connectivity policies and fabric resources easily integrate into the application CI/CD process. Whether rolling out an entire application or just a few microservices, the required resources and connectivity come up automatically. The policy and resource code is reusable and copy-paste portable across clouds.

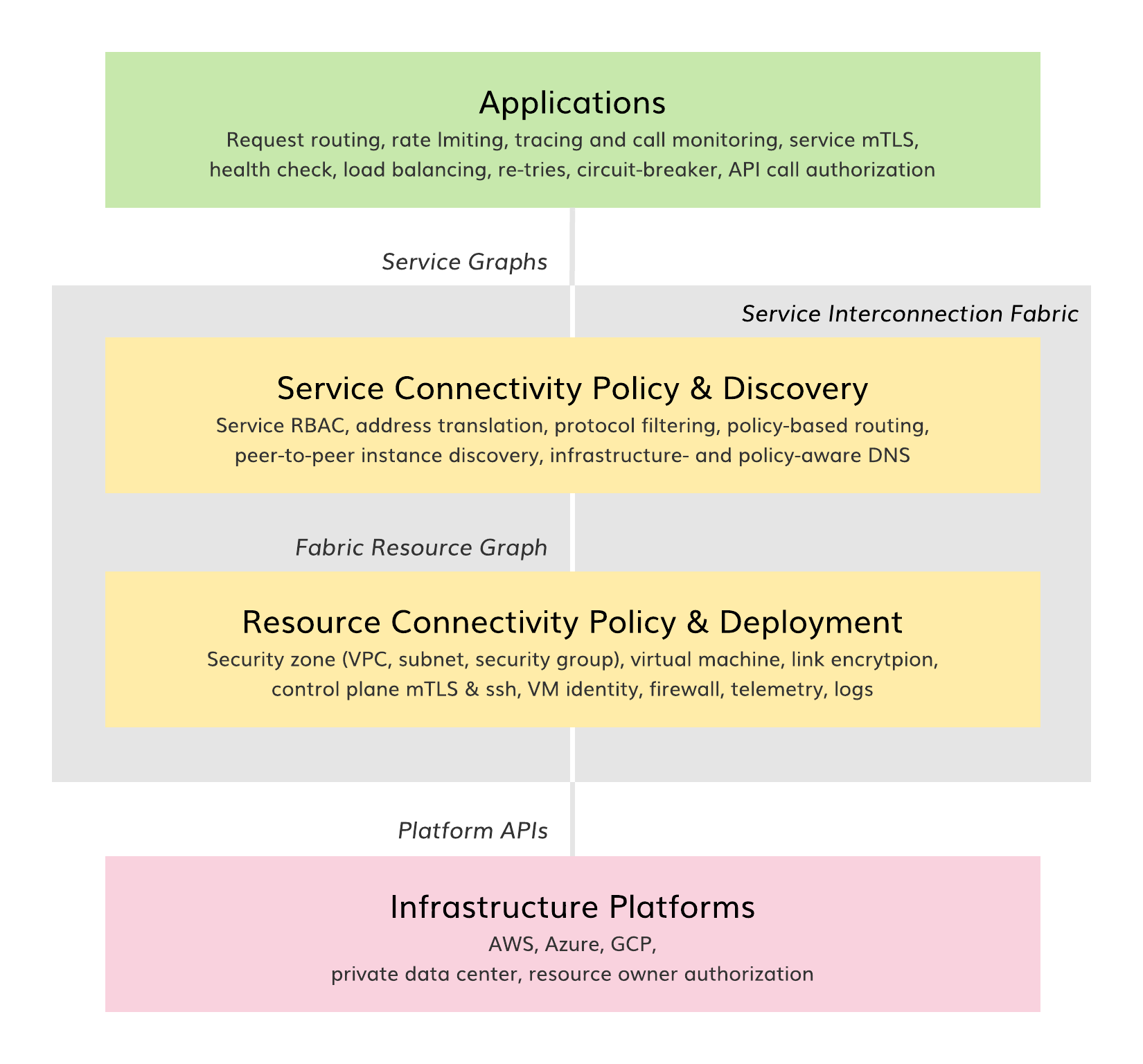

The figure above presents the SIF software layers and their integration into a broader cloud infrastructure-as-code stack. The SIF upper layer enforces application connectivity policy and provides service discovery functions. The SIF bottom layer controls resource connectivity and facilitates resource deployment. In this way, the application communication environment is fully abstracted from the underlying infrastructure platforms. This allows an application to declare communication intent one time, using service identities instead of infrastructure-dependent IP addresses.
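To make addressing by service identity concrete, here is a minimal sketch of identity-based discovery combined with a policy check. The `ServiceRegistry` class, its methods, and the example addresses are hypothetical and do not reflect how the SIF implements service discovery; the sketch only assumes that policy is stored as (client, server) identity pairs and that each instance registers an address when it comes up.

```python
from collections import defaultdict


class ServiceRegistry:
    """Hypothetical identity-based discovery: names in, addresses out."""

    def __init__(self, allowed: set[tuple[str, str]]) -> None:
        self._allowed = allowed  # permitted (client, server) service identities
        self._endpoints: dict[str, list[str]] = defaultdict(list)

    def register(self, service: str, address: str) -> None:
        # Called when a workload instance of `service` comes up in any cloud.
        self._endpoints[service].append(address)

    def resolve(self, client: str, server: str) -> list[str]:
        # Policy is written against identities; addresses are only looked up
        # at resolution time, and only for permitted pairs.
        if (client, server) not in self._allowed:
            return []
        return list(self._endpoints.get(server, []))
```

For example, after `reg = ServiceRegistry({("frontend", "backend")})` and two `register("backend", ...)` calls from different clouds, `reg.resolve("frontend", "backend")` returns both addresses, while `reg.resolve("backend", "frontend")` returns an empty list because no such edge was declared.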

The SIF is fast and easy to deploy. The fabric automatically creates a complete set of identity, security, routing, and telemetry services based on the service graph. No SDN or VNF solutions are required to establish secure connectivity between application services in different clouds or clusters. Container network interfaces (CNIs), logging, and telemetry are built in and configured automatically. This makes the fabric well suited to hybrid clouds and hybrid VM/container deployments.

Product Architecture

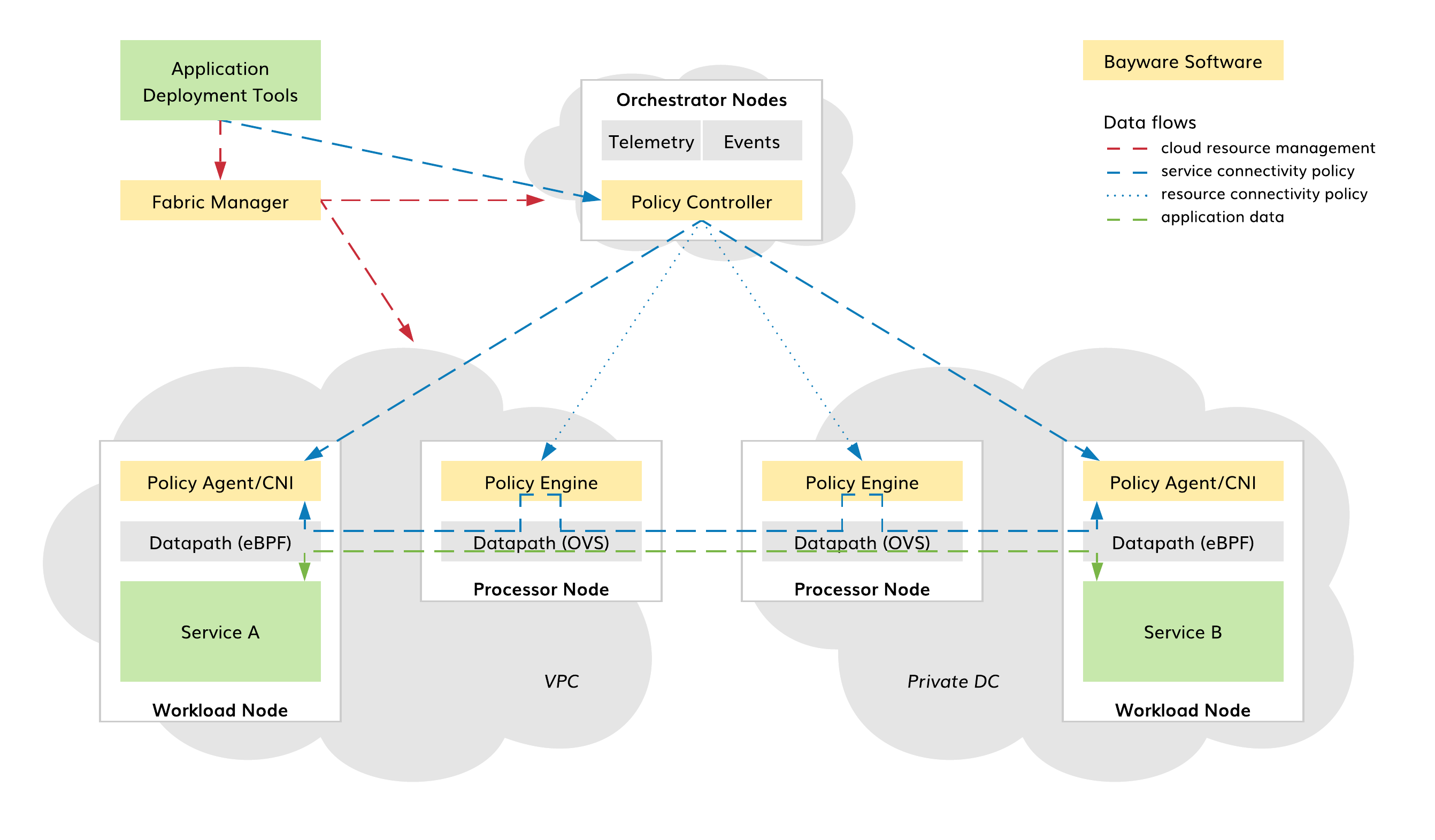

The SIF consists of Fabric Manager and three types of nodes: Orchestrator, Processor, and Workload. Fabric Manager deploys nodes across clouds. Orchestrator controls connectivity policies. Processor secures the trust domain boundaries by enforcing resource connectivity policy on orchestrator requests and service connectivity policy on workload requests. Workload provides application services with connectivity and service discovery functions in strict accordance with an application communication intent.

Fig. 2 SIF Product Architecture
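The Processor's dual role can be sketched as a single admission check that selects a policy table by request origin. This is an illustrative model under stated assumptions, not Bayware's Policy Engine: the `Request`, `Origin`, and `Processor` names are hypothetical, and both policies are simplified to sets of permitted (subject, target) pairs.

```python
from dataclasses import dataclass
from enum import Enum


class Origin(Enum):
    ORCHESTRATOR = "orchestrator"
    WORKLOAD = "workload"


@dataclass(frozen=True)
class Request:
    origin: Origin  # which kind of node sent the request
    subject: str    # requesting node or service identity
    target: str     # resource or service to be reached


@dataclass
class Processor:
    """Hypothetical trust-domain gatekeeper holding two separate policy tables."""
    resource_policy: set[tuple[str, str]]  # orchestrator -> resource
    service_policy: set[tuple[str, str]]   # service -> service

    def admit(self, req: Request) -> bool:
        # Resource policy governs orchestrator requests; service policy
        # governs workload requests. A pair valid in one table is not
        # accepted through the other.
        table = (self.resource_policy if req.origin is Origin.ORCHESTRATOR
                 else self.service_policy)
        return (req.subject, req.target) in table
```

Keeping the two tables separate means an orchestrator entry cannot be replayed as a workload request, which mirrors the split between resource and service connectivity policy described above.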

Bayware software components in the SIF are as follows:

- BWCTL CLI and BWCTL-API CLI tools (Fabric Manager);

- Policy Controller (Orchestrator nodes);

- Policy Engine (Processor nodes);

- Policy Agent (Workload nodes).

All Bayware software components belong only to the SIF control plane. Application data traverses Linux kernel datapaths controlled by the Policy Agents and Policy Engines. The Policy Agent programs extended Berkeley Packet Filter (eBPF) on its workload node and communicates the application communication intent to the relevant Policy Engines and Agents. The Policy Engine validates connectivity requests, programs Open vSwitch (OVS) on its processor node, and forwards the requests onward.
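The control-plane sequence described above (validate, program the local datapath, forward) can be modeled as a request walking a path of nodes. This is a hypothetical sketch, not Bayware code: `Node` and `propagate` are invented names, each node's "datapath" is a list of rule strings standing in for eBPF or OVS state, and the rollback on rejection is an assumption added so that a refused request leaves no partial state behind.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """A control-plane hop; `rules` stands in for its kernel datapath state."""
    name: str
    kind: str  # "agent" (eBPF on a workload node) or "engine" (OVS on a processor node)
    allowed: set[tuple[str, str]] = field(default_factory=set)
    rules: list[str] = field(default_factory=list)


def propagate(src: str, dst: str, path: list[Node]) -> bool:
    """Walk a connectivity request hop by hop; undo everything on refusal."""
    rule = f"{src}->{dst}"
    programmed: list[Node] = []
    for node in path:
        # Engines validate; agents only program their local datapath.
        if node.kind == "engine" and (src, dst) not in node.allowed:
            for done in programmed:  # roll back hops already programmed
                done.rules.remove(rule)
            return False
        node.rules.append(rule)  # placeholder for an eBPF or OVS update
        programmed.append(node)  # then "forward" to the next hop
    return True
```

A request from `frontend` to `backend` across an agent, an engine that permits the pair, and a second agent leaves one rule on each hop; the same path with an engine that lacks the pair returns `False` and leaves every hop unchanged.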

This unique architecture allows the SIF to automatically generate, configure, and adapt secure connectivity between application services across any set of public, private, or hybrid clouds. Application deployment tools communicate application intent to the SIF in a fully infrastructure-agnostic manner, and the SIF takes care of all communication settings: VPCs, subnets, security groups, node firewalls, link encryption, address translation, packet filtering, policy-based routing, and service discovery.

In summary, application connectivity becomes part of the application deployment code. While deploying an application, the required resources and connectivity come up in the SIF automatically. This ensures fast, easy, and secure cloud, multicloud, and hybrid cloud migration.